Don't use AccessiBe (And other automated follies)

- The Unbearable Inaccessibility of Tooling

- The Even More Unbearable Inaccessibility of Overlays

- Just hire an Accessibility Engineer

- A11y is FUNDamental

Hello, folks;

Today, I have for you something that many accessibility experts (and I mean many) have written about, at length, before.

However, I'm writing this quick post about it for two reasons: 1) Neil Patel is the originator of the current discourse, and he's better known in the SEO community, and 2) SEOs are, in general, less likely to read accessibility blogs. (Though if you're interested in the intersection of a11y and SEO, stop reading this and follow @JoeHall.)

(My secret third reason is: unless you're at the intersection of SEO and a11y, you're unlikely to know both Neil Patel's and AccessiBe's reputations. Uh. If you know what I mean, allegedly, etc etc.)

You want an ADA-compliant site; you want a site that is WCAG 2.0 or 2.1 AA compliant. You want users to be able to, like, read your site. Neil Patel is over on Product Hunt recommending the aCe service and saying it's great. And you like Neil Patel, so you use the tool on your site. Here's the thing: if you want an accessible site, AccessiBe is not going to get you one. And it might (allegedly) (don't sue me) get you sued.

AccessiBe claims to be able to use ML and AI to make your site compliant. Now again, maybe your alarm bells aren't ringing, because there is some SEO work that can be done with automation, and therefore there may be some a11y work that can be done with automation. But if someone came up to you and said "I've figured out a way to 100% SEO up a site using machine learning," you would know that wasn't true. Right?

There are two issues here: one is the inherent problem of using tools on accessibility problems. The other is the inherent problem of overlay tools.

The Unbearable Inaccessibility of Tooling

You can run through a site with Lighthouse or another accessibility auditing tool and it will give you some helpful hints. I really like the WAVE tool and the Deque axe extension. But there are tons of things an automated checker can't get: is your language accessible? A screen reader might be able to navigate your content, but is it a pleasant experience? Do you use alt text that doesn't just "make sense", but is like... good?

This is the problem with automating and toolifying a lot of audits: while the tools can and will get better, in the end there are things that humans can see that machines can't.
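To make the alt text point concrete, here's a minimal sketch of the kind of check an automated tool can do, written with Python's standard-library `html.parser`. The class name, function name, and sample markup are all made up for illustration; this is not how Lighthouse, WAVE, or axe actually work internally.

```python
from html.parser import HTMLParser


class AltTextAudit(HTMLParser):
    """Hypothetical checker: sorts <img> tags by whether they have an alt attribute."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []  # src values of images with no alt attribute at all
        self.has_alt = []      # (src, alt) pairs a mechanical check would accept

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src", "")
        if "alt" not in attributes:
            self.missing_alt.append(src)
        else:
            # alt="" is valid for purely decorative images, so it is not flagged.
            self.has_alt.append((src, attributes["alt"] or ""))


def audit_alt_text(html):
    parser = AltTextAudit()
    parser.feed(html)
    parser.close()
    return parser


# Made-up sample markup.
sample = """
<img src="chart.png">
<img src="team.jpg" alt="image">
<img src="divider.png" alt="">
<img src="ceo.jpg" alt="Our CEO speaking at the 2020 all-hands meeting">
"""

report = audit_alt_text(sample)
print("Flagged:", report.missing_alt)
print("Accepted:", [alt for _, alt in report.has_alt])
```

The checker flags `chart.png` and happily accepts `alt="image"`, which tells a screen reader user nothing. Deciding whether accepted alt text is actually good is the part that still needs a human.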

The Even More Unbearable Inaccessibility of Overlays

I'm linking directly to the part of the video where Karl Groves, an accessibility expert, talks about the success rate of these overlay accessibility tools. These tools are supposed to "fix accessibility issues" with "the click of a button/one line of code/6 payments of $29.95." Fundamentally, it's bunk, but here's an example.

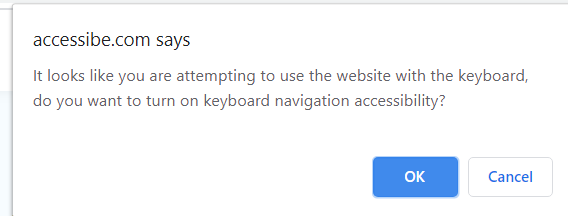

The AccessiBe (which I just almost typo-ed as inaccessiBe; make of that what you will) site loads with insufficient color contrast between the background and foreground, making it difficult for low vision users to read and navigate the page. On top of this, some roles are missing their required ARIA attributes. This is stuff automated tag checkers could pick up, but there's also a pretty annoying user experience for users who have motor control issues or use screen readers. It kind of seems like the product was designed to tick the "biggest" accessibility boxes without taking into consideration what users might actually need: an attempt to ensure you're within the letter of the law, but not the spirit.
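Color contrast is one of the things a machine genuinely can check, because WCAG defines it as a formula. Here's a rough sketch of that formula in Python: the relative luminance and contrast ratio definitions come from WCAG 2.x, and the 4.5:1 (normal text) and 3:1 (large text) thresholds are the AA minimums. The function names are mine, and the colors in the usage lines are arbitrary examples, not values taken from the AccessiBe site.

```python
def _linearize(channel):
    """Convert one 0-255 sRGB channel to its linear value, per the WCAG 2.x definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a 6-digit hex color such as '#1a2b3c'."""
    hex_color = hex_color.lstrip("#")
    r, g, b = (int(hex_color[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground, background):
    """Contrast ratio between two colors; ranges from 1:1 up to 21:1."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground, background, large_text=False):
    """True if the pair meets the WCAG AA minimum contrast for its text size."""
    return contrast_ratio(foreground, background) >= (3.0 if large_text else 4.5)


# Arbitrary example colors: two mid-greys on a white background.
print(passes_aa("#777777", "#ffffff"))
print(passes_aa("#767676", "#ffffff"))
```

Grey `#777777` on white lands just under 4.5:1 and fails, while `#767676` just clears it. That is exactly the sort of box-ticking a tool is good at, and it says nothing about whether the page is pleasant to use.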

The way it works is to overlay "modes" for users. This is pretty unnecessary, especially because building in accessibility from the beginning pays better dividends for everyone.

Just hire an Accessibility Engineer

Look: just have a human person who knows what they're looking at look at your site. Bake in accessibility from the beginning. Format your site logically, and structure your HTML well.

Okay: hiring an accessibility engineer may be outside your budget, but at least running a screen reader test and going through your site with your keyboard can go a long way. Think about it like this: you want people to be able to visit your site and read your content. This is just another way to do that.

Test a representative sample of your pages and apply the fixes you find there to all pages of that type.
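As one small example of what "structure your HTML well" can mean in practice, here's a sketch that walks a page's headings and reports skipped levels (say, an h2 followed directly by an h4). Not skipping levels is a common convention rather than a hard WCAG requirement, and the class name, function name, and sample markup are all hypothetical. It is no substitute for actually listening to the page in a screen reader.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Hypothetical helper: records heading levels (1-6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # Match h1..h6 only; other two-letter tags such as <hr> are ignored.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def skipped_heading_levels(html):
    """Return a message for each place the outline jumps down more than one level."""
    parser = HeadingOutline()
    parser.feed(html)
    parser.close()
    problems = []
    previous = None
    for level in parser.levels:
        if previous is not None and level > previous + 1:
            problems.append(f"h{previous} is followed by h{level}")
        previous = level
    return problems


# Made-up sample pages.
messy_page = "<h1>Blog</h1><h2>Posts</h2><h4>Comments</h4>"
tidy_page = "<h1>Blog</h1><h2>Posts</h2><h3>Comments</h3><h2>About</h2>"

print(skipped_heading_levels(messy_page))
print(skipped_heading_levels(tidy_page))
```

Run it on one page of each type in your sample; a fix to a shared template then carries over to every page built from it.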

A11y is FUNDamental

Automated testing can cover only a few WCAG 2.0 best practices, and automated fixing isn't reliable even for those.

Here are some other reads:

- Screen Reader User Survey Results

- Testing with Screen Readers

- The Underlying Truth about Overlays

- #accessiBe Will Get You Sued

- Web Accessibility Overlays Don't Work

- Automated Lies, with One Line of Code

Peace.